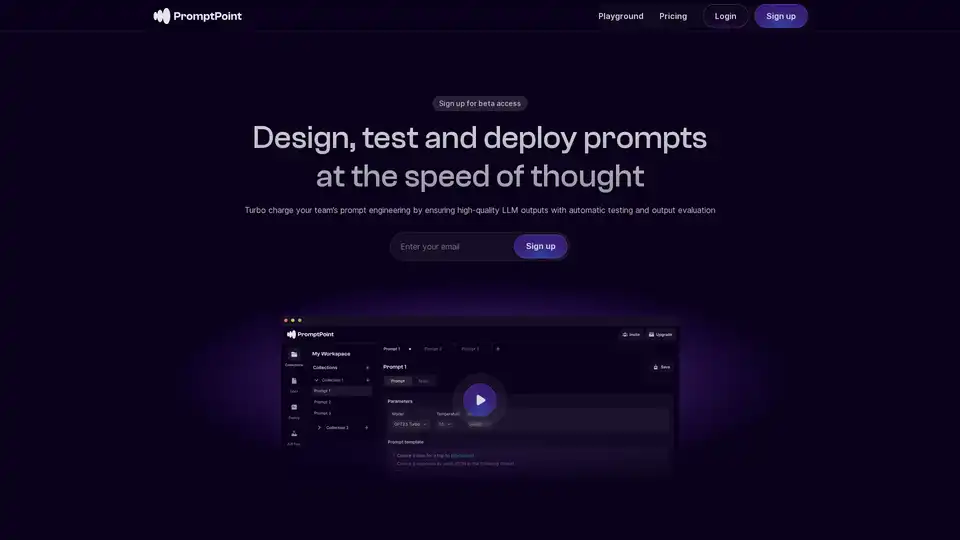

PromptPoint

Overview of PromptPoint

PromptPoint: Design, Test, and Deploy Prompts at the Speed of Thought

PromptPoint is a platform designed to streamline the process of prompt engineering for Large Language Models (LLMs). It enables teams to design, test, and deploy prompts efficiently, ensuring high-quality outputs through automated testing and evaluation.

What is PromptPoint?

PromptPoint is a no-code platform for designing, testing, and deploying prompts for LLMs. It aims to bridge the gap between technical execution and real-world relevance by making prompt engineering accessible to everyone on a team, not just engineers. It provides tools to create, organize, version, deploy, and monitor prompts.

How does PromptPoint work?

PromptPoint makes prompt engineering more efficient and predictable in the following ways:

- Prompt Creation and Organization: Offers a seamless environment for designing and organizing prompts, including templating and saving configurations.

- Automated Testing and Evaluation: Lets users run automated tests against their prompts and get comprehensive results quickly.

- Version Control and Deployment: Allows users to structure prompt configurations with precision and deploy them instantly for use in software applications.

- Collaboration: Promotes collaboration among team members, allowing non-engineers to contribute to the prompt engineering process.
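To make "templating" and "automated testing" more concrete, here is a minimal, tool-agnostic sketch in Python. It is not PromptPoint's API, which this page does not document. `render_prompt`, `fake_llm`, `run_tests`, and the test cases are hypothetical stand-ins, and a real setup would call an actual model instead of the stub.

```python
from string import Template

# Hypothetical prompt template; $-placeholders are filled in per test case.
SUMMARY_PROMPT = Template("Summarize the following text in one sentence:\n$text")


def render_prompt(template: Template, **variables: str) -> str:
    """Fill a prompt template; raises KeyError if a variable is missing."""
    return template.substitute(**variables)


def fake_llm(prompt: str) -> str:
    """Hypothetical stand-in for a model call: returns the first sentence of the input text."""
    text = prompt.split("\n", 1)[1]
    return text.split(".")[0] + "."


# Hypothetical test cases: template inputs plus a simple substring check on the output.
TEST_CASES = [
    {"vars": {"text": "Cats sleep a lot. They also purr."}, "must_contain": "Cats"},
    {"vars": {"text": "Rust is a systems language. It is fast."}, "must_contain": "Rust"},
    {"vars": {"text": "Go has goroutines. They are cheap."}, "must_contain": "channels"},
]


def run_tests(template: Template, model, cases: list) -> list:
    """Render each case, call the model, and record (output, passed) pairs."""
    results = []
    for case in cases:
        output = model(render_prompt(template, **case["vars"]))
        results.append((output, case["must_contain"] in output))
    return results


if __name__ == "__main__":
    results = run_tests(SUMMARY_PROMPT, fake_llm, TEST_CASES)
    passed = sum(ok for _, ok in results)
    for output, ok in results:
        print("PASS" if ok else "FAIL", "|", output)
    print(f"{passed}/{len(results)} checks passed")
```

The third case is meant to fail, showing how a check flags an output that misses a requirement. Keeping test cases as data, separate from the template, means the same cases can be rerun after every prompt revision.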

Key Features of PromptPoint

- No-Code Platform: Empowers non-engineers to write and test prompt configurations.

- LLM Flexibility: Seamlessly connects with hundreds of large language models.

- Automated Testing: Ensures high-quality LLM outputs with automatic testing and output evaluation.

- Collaboration: Facilitates team collaboration, bridging the gap between technical execution and real-world relevance.

- Prompt Organization: Templates, saves, and organizes prompt configurations.

Who is PromptPoint for?

PromptPoint is ideal for:

- Teams looking to leverage LLMs effectively.

- Organizations seeking to streamline their prompt engineering process.

- Non-technical team members who want to contribute to prompt design.

- Businesses that require high-quality and reliable LLM outputs.

Why Choose PromptPoint?

- Efficiency: Automate tests and get comprehensive results in seconds.

- Collaboration: Enable your entire team to participate in prompt engineering.

- Flexibility: Connect with hundreds of large language models.

- Accessibility: The no-code platform makes prompt engineering accessible to non-engineers.

- Quality: Ensure high-quality LLM outputs with automatic testing and output evaluation.

How do you use PromptPoint?

- Sign up for beta access.

- Create and organize your prompts using the platform's tools.

- Run automated tests and evaluate the results.

- Version, deploy, and monitor usage in your software applications.

Get Started with PromptPoint

Ready to turbo-charge your prompt design? Sign up for PromptPoint today and start building LLM-powered applications with your whole team.

Best Alternative Tools to "PromptPoint"

Train, manage, and evaluate custom large language models (LLMs) fast and efficiently on Entry Point AI with no code required.

Parea AI is the ultimate experimentation and human annotation platform for AI teams, enabling seamless LLM evaluation, prompt testing, and production deployment to build reliable AI applications.

Maxim AI is an end-to-end evaluation and observability platform that helps teams ship AI agents reliably and 5x faster with comprehensive testing, monitoring, and quality assurance tools.

Weco AI automates machine learning experiments using AIDE ML technology, optimizing ML pipelines through AI-driven code evaluation and systematic experimentation for improved accuracy and performance metrics.

Future AGI is a unified LLM observability and AI agent evaluation platform that, according to the vendor, helps enterprises achieve 99% accuracy in AI applications through comprehensive testing, evaluation, and optimization tools.

Agent Zero is an open-source AI framework for building autonomous agents that learn and grow organically. It features multi-agent cooperation, code execution, and customizable tools.

Build task-oriented custom agents for your codebase that perform engineering tasks with high precision, drawing on intelligence and context from your data. Build agents for use cases such as system design, debugging, integration testing, and onboarding.

Magic Loops is a no-code platform that combines LLMs and code to build professional AI-native apps in minutes. Automate tasks, create custom tools, and explore community apps without any coding skills.

Bolt Foundry provides context engineering tools to make AI behavior predictable and testable, helping you build trustworthy LLM products. Test LLMs like you test code.

Prompt Genie is an AI-powered tool that instantly creates optimized super prompts for LLMs like ChatGPT and Claude, eliminating prompt engineering hassles. Test, save, and share via Chrome extension for 10x better results.

GPT Prompt Lab is a free AI prompt generator that helps content creators craft high-quality prompts for ChatGPT, Gemini, and more from any topic. Generate, test, and optimize prompts for blogs, emails, code, and SEO content in seconds.

Explore the Awesome ChatGPT Prompts repo, a curated collection of prompts to optimize ChatGPT and other LLMs like Claude and Gemini for tasks from writing to coding. Enhance AI interactions with proven examples.

Roo Code is an open-source AI-powered coding assistant for VS Code, featuring AI agents for multi-file editing, debugging, and architecture. It supports various models, ensures privacy, and customizes to your workflow for efficient development.

Compare prompts side by side in Google's Gemini Pro and OpenAI's ChatGPT, and share the results, to find the best AI model for your needs.