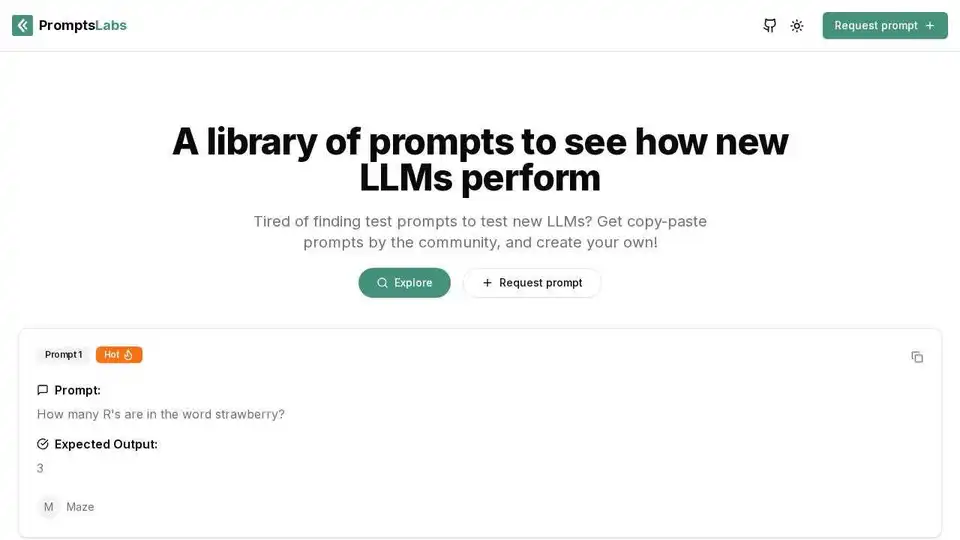

PromptsLabs

Overview of PromptsLabs

PromptsLabs: A Comprehensive AI Prompt Library for LLM Testing

What is PromptsLabs?

PromptsLabs is an AI prompt library designed to help users test new Large Language Models (LLMs). It offers a collection of prompts contributed by the community, allowing users to easily copy and paste prompts for testing purposes. If you're tired of struggling to find test prompts for your new LLMs, PromptsLabs is here to streamline your testing process.

Key Features

- Community-Driven Prompts: A vast library of prompts created and shared by the community. This ensures a diverse range of testing scenarios and prompt styles.

- Easy Copy-Paste Functionality: Prompts can be easily copied and pasted, making the testing process quick and efficient.

- Contribute Your Own Prompts: Users can create and submit their own prompts, contributing to the growth and diversity of the library.

How Does PromptsLabs Work?

PromptsLabs works by providing a centralized repository of prompts designed to test various aspects of LLMs. Each entry in the library includes:

- The Prompt: The actual text prompt to be given to the LLM.

- Expected Output: The desired or correct response from the LLM.

Users can browse the library, select a prompt, and copy it into their LLM testing environment. By comparing the LLM's actual output with the expected output, users can evaluate the LLM's performance and identify areas for improvement.
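The comparison step can be sketched in Python. This is a minimal illustration, not PromptsLabs code: the `PromptCase` class and the `normalize` and `matches` helpers are hypothetical names. Treating a response as correct when it contains the normalized expected text is an assumption that suits short answers only.

```python
import re
from dataclasses import dataclass


@dataclass(frozen=True)
class PromptCase:
    """One library entry: a prompt plus its expected output (hypothetical structure)."""
    prompt: str
    expected: str


def normalize(text: str) -> str:
    """Lowercase, trim, and collapse whitespace so trivial differences are ignored."""
    return re.sub(r"\s+", " ", text.strip().lower())


def matches(case: PromptCase, actual: str) -> bool:
    """Assumed criterion: the normalized expected text appears in the normalized response."""
    return normalize(case.expected) in normalize(actual)


case = PromptCase(
    prompt="How many R's are in the word strawberry?",
    expected="3",
)
print(matches(case, "There are 3 R's in strawberry."))  # True
print(matches(case, "There are two R's."))  # False
```

A containment check like this is deliberately loose. Longer expected outputs, such as explanations, usually need human review or a stricter scoring rule.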

How to Use PromptsLabs

Using PromptsLabs is straightforward:

- Explore the Library: Browse the available prompts to find relevant testing scenarios.

- Select a Prompt: Choose a prompt that aligns with your testing goals.

- Copy the Prompt: Use the copy-paste functionality to transfer the prompt to your LLM testing environment.

- Evaluate the Output: Compare the LLM's output with the expected output to assess performance.

- Contribute (Optional): Share your own prompts with the community to help improve the library.
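The steps above can be automated once prompts have been copied out of the library. The sketch below is illustrative only: `run_suite` and `stub_model` are hypothetical names, the stub stands in for whatever LLM client you actually use, and the case-insensitive substring check is an assumed pass rule that can produce false positives on very short answers.

```python
from typing import Callable, Dict, List, Tuple


def run_suite(
    cases: List[Tuple[str, str]],
    model: Callable[[str], str],
) -> Dict[str, object]:
    """Send each prompt to `model` and record which expected answers were missing."""
    failures = []
    for prompt, expected in cases:
        actual = model(prompt)
        # Assumed pass rule: expected text appears in the response, ignoring case.
        if expected.lower() not in actual.lower():
            failures.append({"prompt": prompt, "expected": expected, "actual": actual})
    passed = len(cases) - len(failures)
    return {"passed": passed, "total": len(cases), "failures": failures}


def stub_model(prompt: str) -> str:
    """Stand-in for a real LLM call; it answers only the strawberry question correctly."""
    if "strawberry" in prompt:
        return "The word strawberry contains 3 R's."
    return "9.11 is larger."


cases = [
    ("How many R's are in the word strawberry?", "3"),
    ("Compare 9.9 and 9.11--which is the largest number?", "9.9 is larger"),
]
report = run_suite(cases, stub_model)
print(f"{report['passed']}/{report['total']} passed")  # 1/2 passed
```

Replacing `stub_model` with a function that calls a real model leaves the rest of the loop unchanged, and the `failures` list shows which prompts need a closer look.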

Who is PromptsLabs For?

PromptsLabs is ideal for:

- AI/ML Engineers: Professionals developing and testing new LLMs.

- Researchers: Individuals conducting research on LLM capabilities and limitations.

- Prompt Engineers: Those focused on crafting effective prompts for LLMs.

- Educators: Instructors teaching about LLMs and prompt engineering.

Why is PromptsLabs Important?

Testing is crucial for ensuring the reliability and effectiveness of LLMs. PromptsLabs simplifies this process by providing a readily available library of test prompts. This saves time and effort, allowing users to focus on analyzing results and improving their models.

Examples of Prompts

Here are a few examples of prompts available on PromptsLabs:

- Prompt 1: "How many R's are in the word strawberry?"

  - Expected Output: "3"

- Prompt 2: "I have 3 apples today. I ate one yesterday. How many do I have left today?"

  - Expected Output: "You have 3 apples today. Eating one yesterday doesn’t change that for today."

- Prompt 3: "Compare 9.9 and 9.11--which is the largest number?"

  - Expected Output: "9.9 is larger because in decimal comparisons, you check digits from left to right. At the tenths place (first decimal), 9 is greater than 1, so 9.9 > 9.11."

By using these prompts, you can test the reasoning, mathematical, and logical capabilities of your LLMs.
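The expected outputs for the first and third example prompts can be checked mechanically. The snippet below is a small sanity check written for this overview, not something PromptsLabs provides, and the variable names are illustrative.

```python
from decimal import Decimal

# Prompt 1: count occurrences of the letter R, ignoring case.
r_count = "strawberry".lower().count("r")
print(r_count)  # 3

# Prompt 3: compare the values as decimal numbers.
larger = max(Decimal("9.9"), Decimal("9.11"))
print(larger)  # 9.9

# Contrast: read as version-style components, the order flips,
# because the tuple (9, 11) sorts after (9, 9).
as_versions = max((9, 9), (9, 11))
print(as_versions)  # (9, 11)
```

The last comparison shows why the third prompt specifies a decimal comparison: the same digits order differently when they are read as version components.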

Practical Value

- Saves Time: No need to create prompts from scratch.

- Improves Testing Quality: Access a diverse range of prompts.

- Facilitates Collaboration: Contribute to and benefit from community knowledge.

Conclusion

PromptsLabs is a valuable resource for anyone working with Large Language Models. Its community-contributed library of prompts, easy-to-use interface, and simple copy-paste workflow make it a practical tool for LLM testing. Start exploring PromptsLabs today to support your LLM development and research efforts.

Best Alternative Tools to "PromptsLabs"

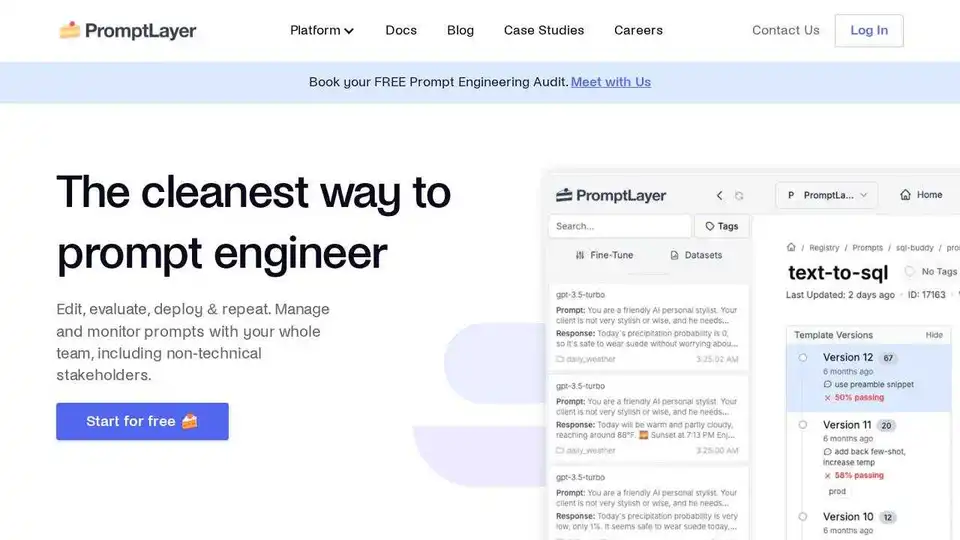

PromptLayer is an AI engineering platform for prompt management, evaluation, and LLM observability. Collaborate with experts, monitor AI agents, and improve prompt quality with powerful tools.

Parea AI is the ultimate experimentation and human annotation platform for AI teams, enabling seamless LLM evaluation, prompt testing, and production deployment to build reliable AI applications.

Lunary is an open-source LLM engineering platform providing observability, prompt management, and analytics for building reliable AI applications. It offers tools for debugging, tracking performance, and ensuring data security.

Compare prompts side by side on Google's Gemini Pro and OpenAI's ChatGPT, and share the results, to find the best AI model for your needs.

Infrabase.ai is the directory for discovering AI infrastructure tools and services. Find vector databases, prompt engineering tools, inference APIs, and more to build world-class AI products.

Athina is a collaborative AI platform that helps teams build, test, and monitor LLM-based features 10x faster. With tools for prompt management, evaluations, and observability, it ensures data privacy and supports custom models.

Train, manage, and evaluate custom large language models (LLMs) quickly and efficiently on Entry Point AI with no code required.

PromptPoint helps you design, test, and deploy prompts fast with automated prompt testing. Turbocharge your team’s prompt engineering with high-quality LLM outputs.

PromptMage is a Python framework simplifying LLM application development. It offers prompt testing, version control, and an auto-generated API for easy integration and deployment.

Latitude is an open-source platform for prompt engineering, enabling domain experts to collaborate with engineers to deliver production-grade LLM features. Build, evaluate, and deploy AI products with confidence.

Weco AI automates machine learning experiments using AIDE ML technology, optimizing ML pipelines through AI-driven code evaluation and systematic experimentation for improved accuracy and performance metrics.

Bolt Foundry provides context engineering tools to make AI behavior predictable and testable, helping you build trustworthy LLM products. Test LLMs like you test code.

Aleph Alpha's PhariaAI empowers enterprises with sovereign AI solutions. Secure data, shape AI-driven knowledge work. Explore PhariaAI for transparent, compliant, and future-proof AI.

Langtail is a low-code platform for testing and debugging AI apps with confidence. Test LLM prompts with real-world data, catch bugs, and ensure AI security. Try it for free!