Whisper

Overview of Whisper

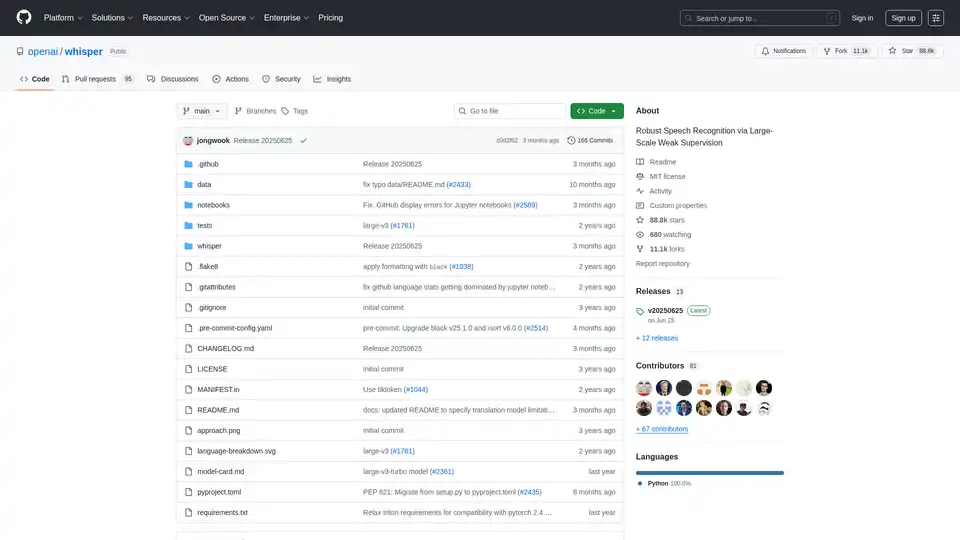

Whisper: Robust Speech Recognition via Large-Scale Weak Supervision

Whisper is a versatile speech recognition model developed by OpenAI, designed for general-purpose use. Trained on a vast and diverse audio dataset, Whisper excels in multilingual speech recognition, speech translation, and language identification, making it a powerful tool for a variety of applications.

What is Whisper?

Whisper is a Transformer sequence-to-sequence model trained on a multitude of speech processing tasks. It consolidates multilingual speech recognition, speech translation, spoken language identification, and voice activity detection into a single model. This is achieved by representing these tasks as a sequence of tokens predicted by the decoder.

How does Whisper work?

Whisper is an encoder-decoder Transformer. Input audio is split into 30-second chunks and converted to log-Mel spectrograms, which the encoder processes; the decoder then predicts a sequence of tokens that can represent various speech-related tasks. Training uses a multitask format in which special tokens specify the task or classification target, so a single model replaces several stages of a traditional speech-processing pipeline.
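To make the multitask format concrete, the sketch below assembles the kind of special-token prefix the decoder is conditioned on. The token spellings follow the Whisper paper and tokenizer; the helper name `build_task_prefix` and its arguments are illustrative assumptions, not part of the library.

```python
from typing import List


def build_task_prefix(language: str, task: str = "transcribe",
                      timestamps: bool = False) -> List[str]:
    """Assemble an illustrative special-token prefix for the decoder."""
    if task not in ("transcribe", "translate"):
        raise ValueError(f"unsupported task: {task!r}")
    prefix = [
        "<|startoftranscript|>",  # marks the start of decoding
        f"<|{language}|>",        # language tag, e.g. <|ja|>
        f"<|{task}|>",            # task tag: transcribe or translate
    ]
    if not timestamps:
        prefix.append("<|notimestamps|>")  # ask for text without timestamps
    return prefix


print("".join(build_task_prefix("ja", task="translate")))
```

Swapping the task or language token is all that changes between tasks; the model and its weights stay the same.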

Key Features and Capabilities:

- Multilingual Speech Recognition: Accurately transcribes speech in multiple languages.

- Speech Translation: Translates spoken content from one language to another.

- Language Identification: Identifies the language being spoken in an audio clip.

- Voice Activity Detection: Detects the presence or absence of human speech.
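As one example of these capabilities, language identification might be called from Python roughly as follows once Whisper is installed (see "How to use Whisper?"). The `whisper` calls mirror the project's README; the wrapper names `top_languages` and `identify_language` and the default model are assumptions made for this sketch.

```python
def top_languages(probs, k=3):
    """Return the k most probable (language_code, probability) pairs."""
    return sorted(probs.items(), key=lambda item: item[1], reverse=True)[:k]


def identify_language(path, model_name="turbo"):
    """Detect the spoken language from the first 30 seconds of an audio file."""
    import whisper  # imported lazily so top_languages works without it

    model = whisper.load_model(model_name)
    # Load the audio and pad or trim it to the 30-second window the model expects.
    audio = whisper.pad_or_trim(whisper.load_audio(path))
    mel = whisper.log_mel_spectrogram(audio, n_mels=model.dims.n_mels).to(model.device)
    # detect_language returns language tokens and a {code: probability} mapping.
    _, probs = model.detect_language(mel)
    return top_languages(probs)
```

Calling `identify_language("audio.mp3")` would return the most likely language codes with their probabilities; the file name here is a placeholder.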

How to use Whisper?

Installation:

- Ensure you have Python (3.8-3.11) and PyTorch installed.

- Install the latest version of Whisper using pip:

      pip install -U openai-whisper

- Alternatively, install directly from the GitHub repository:

      pip install git+https://github.com/openai/whisper.git

- FFmpeg is also required. The Whisper README provides installation instructions for various operating systems.
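Before running anything, it can help to confirm that the prerequisites listed above are in place. The following is a minimal sketch using only the standard library; the function name `check_environment` and the result keys are assumptions, and the Python version range is simply the one stated above.

```python
import importlib.util
import shutil
import sys


def check_environment():
    """Report whether each prerequisite for Whisper appears to be available."""
    return {
        "python_3.8_to_3.11": (3, 8) <= sys.version_info[:2] <= (3, 11),
        "ffmpeg_on_path": shutil.which("ffmpeg") is not None,
        "torch_installed": importlib.util.find_spec("torch") is not None,
        "whisper_installed": importlib.util.find_spec("whisper") is not None,
    }


for name, ok in check_environment().items():
    print(f"{name}: {'ok' if ok else 'missing'}")
```

A "missing" entry only means the check did not find the component in the current environment; it does not diagnose why.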

Command-Line Usage:

- Transcribe audio files using the `whisper` command:

      whisper audio.flac audio.mp3 audio.wav --model turbo

- Specify the language for transcription:

      whisper japanese.wav --language Japanese

- Translate speech into English:

      whisper japanese.wav --model medium --language Japanese --task translate
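When the command line needs to be driven from a script, the same flags can be assembled programmatically. This sketch only builds the argument list from the options shown above (`--model`, `--language`, `--task`); the helper name `build_whisper_command` is hypothetical.

```python
import shlex


def build_whisper_command(files, model="turbo", language=None, task="transcribe"):
    """Build the argv list for the whisper CLI from the flags shown above."""
    if not files:
        raise ValueError("at least one audio file is required")
    command = ["whisper", *files, "--model", model, "--task", task]
    if language is not None:
        command += ["--language", language]
    return command


command = build_whisper_command(["japanese.wav"], model="medium",
                                language="Japanese", task="translate")
print(shlex.join(command))
# To actually run it: subprocess.run(command, check=True)
```

Passing a list to `subprocess.run` avoids shell quoting problems with file names that contain spaces.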

Python Usage:

- Use Whisper within Python scripts:

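      import whisper

      # Load the model weights (downloaded on first use).
      model = whisper.load_model("turbo")
      # Transcribe the file and print the recognized text.
      result = model.transcribe("audio.mp3")
      print(result["text"])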
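Beyond the full text, the result of `transcribe` also carries per-segment timing under `result["segments"]`, with `start`, `end`, and `text` fields. As a sketch of a more customized workflow, the hand-rolled formatter below turns such segments into SRT subtitles; the function names are assumptions, and this is not presented as the library's own subtitle writer.

```python
def format_timestamp(seconds):
    """Convert seconds to the HH:MM:SS,mmm form used by SRT subtitles."""
    total_ms = int(round(seconds * 1000))
    hours, rest = divmod(total_ms, 3_600_000)
    minutes, rest = divmod(rest, 60_000)
    secs, millis = divmod(rest, 1000)
    return f"{hours:02d}:{minutes:02d}:{secs:02d},{millis:03d}"


def segments_to_srt(segments):
    """Render segments (dicts with start, end, text) as SRT text."""
    blocks = []
    for index, segment in enumerate(segments, start=1):
        start = format_timestamp(segment["start"])
        end = format_timestamp(segment["end"])
        blocks.append(f"{index}\n{start} --> {end}\n{segment['text'].strip()}\n")
    return "\n".join(blocks)


def transcribe_to_srt(path, model_name="turbo"):
    """Transcribe an audio file and return its subtitles as SRT text."""
    import whisper  # imported lazily so the formatters work without it

    model = whisper.load_model(model_name)
    result = model.transcribe(path)
    return segments_to_srt(result["segments"])
```

Keeping the formatting separate from the model call makes it easy to check the subtitle logic without loading any weights.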

Available Models:

Whisper offers several models with varying sizes and performance characteristics:

| Size | Parameters | English-only model | Multilingual model | Required VRAM | Relative speed |
|---|---|---|---|---|---|
| tiny | 39 M | tiny.en | tiny | ~1 GB | ~10x |
| base | 74 M | base.en | base | ~1 GB | ~7x |
| small | 244 M | small.en | small | ~2 GB | ~4x |
| medium | 769 M | medium.en | medium | ~5 GB | ~2x |
| large | 1550 M | N/A | large | ~10 GB | 1x |
| turbo | 809 M | N/A | turbo | ~6 GB | ~8x |

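The .en models are optimized for English-only applications. The turbo model is an optimized version of large-v3 that transcribes faster with minimal accuracy degradation, but it is not trained for translation, which is why the translation example above uses medium.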
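The table can also be treated as data when choosing a model for a given GPU. The sketch below copies the approximate VRAM and relative-speed figures from the table and lists the models that fit a memory budget, fastest first; the helper name `models_that_fit` is an assumption, and the figures are rough guides rather than guarantees.

```python
# (name, approx. required VRAM in GB, relative speed) copied from the table.
MODELS = [
    ("tiny", 1, 10),
    ("base", 1, 7),
    ("small", 2, 4),
    ("medium", 5, 2),
    ("large", 10, 1),
    ("turbo", 6, 8),
]


def models_that_fit(vram_gb):
    """Return model names whose approximate VRAM need fits the budget, fastest first."""
    fitting = [model for model in MODELS if model[1] <= vram_gb]
    fitting.sort(key=lambda model: model[2], reverse=True)
    return [name for name, _, _ in fitting]


print(models_that_fit(6))
```

Speed is only one criterion; larger models generally trade speed for accuracy, so the fastest model that fits is a starting point rather than a final answer.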

Why choose Whisper?

- Accuracy: Trained on a large and diverse dataset, Whisper is robust to accents, background noise, and technical language.

- Versatility: It supports multiple languages and tasks, making it suitable for a wide range of applications.

- Ease of Use: With simple installation and usage, Whisper can be quickly integrated into various projects.

- Open Source: The code and model weights are released under the MIT License, which allows customization and community-driven improvements.

Who is Whisper for?

Whisper is ideal for:

- Researchers in speech processing and machine learning.

- Developers building applications that require speech recognition or translation.

- Professionals in fields such as transcription, media analysis, and accessibility.

Best way to leverage Whisper?

- Experiment with different model sizes to find the optimal balance between speed and accuracy for your specific use case.

- Utilize the command-line interface for quick transcriptions and translations.

- Integrate Whisper into Python scripts for more complex and customized workflows.

- Explore third-party extensions and integrations to extend Whisper's capabilities.
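For the first suggestion, a small timing harness is often enough to compare model sizes on your own audio. This is a minimal sketch: `time_call` and `compare_models` are hypothetical helper names, the default model list is arbitrary, and a single run per model gives only a rough indication (the first call also includes any download and load time effects).

```python
import time


def time_call(fn, *args, **kwargs):
    """Run fn once and return (result, elapsed_seconds)."""
    start = time.perf_counter()
    result = fn(*args, **kwargs)
    return result, time.perf_counter() - start


def compare_models(path, model_names=("tiny", "base", "turbo")):
    """Transcribe one file with several models and collect timing and text."""
    import whisper  # imported lazily so time_call works without it

    report = {}
    for name in model_names:
        model = whisper.load_model(name)
        result, seconds = time_call(model.transcribe, path)
        report[name] = {"seconds": seconds, "text": result["text"]}
    return report
```

Reading the transcripts side by side with the timings makes the speed and accuracy trade-off visible for your specific recordings.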

Conclusion

Whisper is a powerful and versatile tool for speech recognition, offering high accuracy and broad language support. Its open-source nature and ease of use make it an excellent choice for a wide range of applications. Whether you need to transcribe audio, translate speech, or identify languages, Whisper provides a robust solution.


Best Alternative Tools to "Whisper"

Supertranslate is an AI-powered platform that converts speech to text, generates subtitles, and translates audio/video content into 125+ languages, making it perfect for reaching global audiences.

LM-Kit provides enterprise-grade toolkits for local AI agent integration, combining speed, privacy, and reliability to power next-generation applications. Leverage local LLMs for faster, cost-efficient, and secure AI solutions.

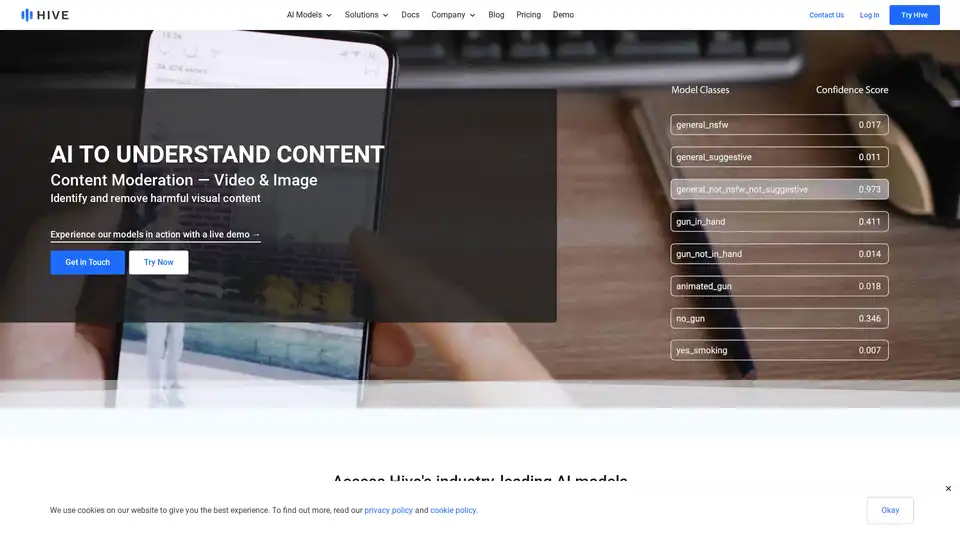

Hive provides cutting-edge AI models for content understanding, search, and generation. Ideal for moderation, brand protection, and generative tasks with seamless API integration.

TranscribeMe provides accurate transcription, translation, data annotation, and AI dataset services using AI and human experts. Get fast, affordable, and customized solutions for legal, medical, and enterprise needs.

Transcri is an AI-powered transcription software to convert audio into text and generate subtitles for your videos. Supports 50+ languages. Start for free!

AI Phone translates phone, voice, and video calls in real time in 150+ languages using AI: you speak your language, and the other party hears theirs. Works with WhatsApp and other apps.

SpeechBrain is an open-source toolkit for conversational AI, designed to accelerate research and development. It supports speech recognition, enhancement, text-to-speech, and more. Easy to install and customize.

CSC Voice AI transforms Microsoft Teams meetings with real-time, multilingual translation and transcription powered by Azure AI. Supports 24+ languages for efficient international collaboration.

Azure AI Speech Studio empowers developers with speech-to-text, text-to-speech, and translation tools. Explore features like custom models, voice avatars, and real-time transcription to enhance app accessibility and engagement.

Ultravox is a next-gen Voice AI platform designed for scale. It uses an open-source Speech Language Model (SLM) to understand speech naturally, offering human-like conversations with low latency and cost.

TTS-Voice-Wizard converts speech to text for VRChat avatars, sending text as OSC messages. Supports multiple voices, translations, and integrations.

Lingvanex provides AI-powered speech and translation solutions for businesses. Translate text, documents, audio, and images into 100+ languages. Secure on-premise options and a Translation API are available.

WiseTalk is a voice-activated AI assistant powered by ChatGPT, offering real-time help, voice translation, and proofreading. It utilizes speech-to-text and text-to-speech for intuitive voice-driven conversations.